Turn idle Macs into an AI compute fleet

Your spare Mac has 36GB of RAM doing nothing. Fix that. Run DeepSeek-R1 70B on the Studio, FLUX image generation on the MacBook, and Qwen3-ASR transcription on the Mini, all through one endpoint.


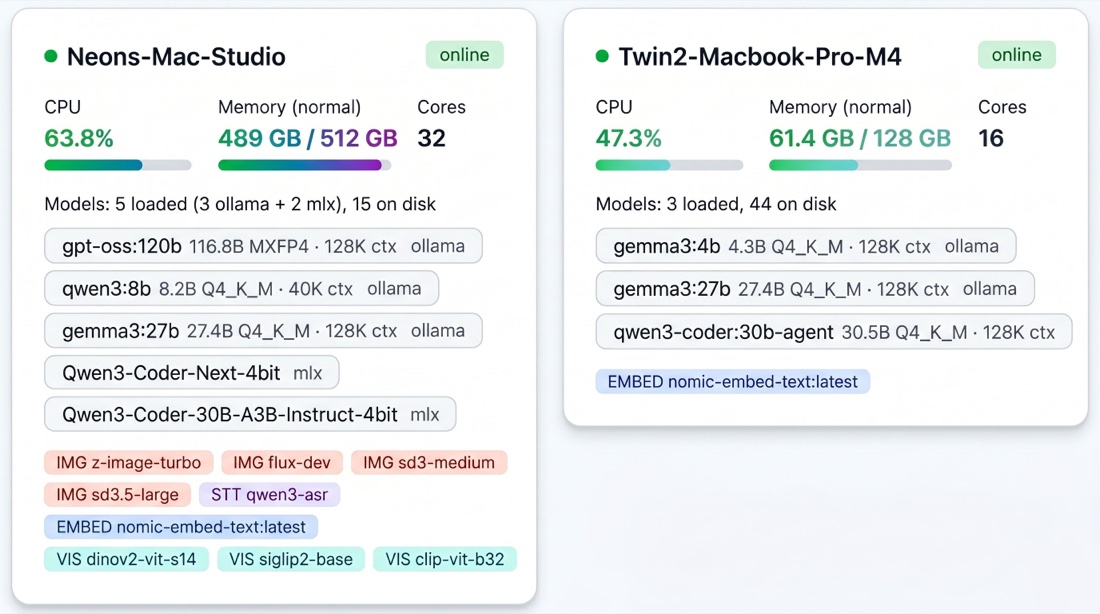

Every node, every loaded model, and every image-generation, speech-to-text, embedding, and vision service ready to route, all in one view. No ops console to build. No Grafana to wire up.

gpt-oss:120b and MLX coding models, and a MacBook Pro M4 running Gemma. Both visible from one URL.

Sound familiar?

You're running Aider, CrewAI, OpenClaw, or other AI agents. Cloud API bills run to hundreds of dollars a month and keep climbing. Every token costs money. Every request leaves your network.

You switched to Ollama on your Mac. Free, private, fast. But now you're constrained to a single device. Requests queue up behind each other. Larger models need more RAM than your laptop has. Agents stall waiting for inference.

Your Mac Studio with 256GB. Your old MacBook Air with 16GB. Your Mac Mini in the closet. All that memory and compute, doing nothing. Herd connects them all into one endpoint. Big models route to the machine with the most memory. Small models run on the lightweight devices. Every machine contributes what it can.

Intelligent routing that gets smarter the longer it runs. Every component exists to serve one thing: getting the best response as fast as possible.

Every node is scored on seven signals: thermal state, memory fit, queue depth, latency history, role affinity, availability trend, and context fit. Each request goes to the highest-scoring machine.
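To make the seven signals concrete, here is a minimal sketch of weighted scoring. It assumes each signal has already been normalized to [0, 1] (higher is better) and uses made-up weights; Herd's actual weights and normalization are not described here, and the names `WEIGHTS`, `NodeSignals`, `score`, and `pick_node` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); the page names the signals, not their weights.
WEIGHTS = {
    "thermal": 0.15,
    "memory_fit": 0.20,
    "queue_depth": 0.20,
    "latency": 0.15,
    "role_affinity": 0.10,
    "availability": 0.10,
    "context_fit": 0.10,
}


@dataclass
class NodeSignals:
    name: str
    healthy: bool
    signals: dict[str, float]  # each signal pre-normalized to [0, 1], 1 = best


def score(node: NodeSignals) -> float:
    """Weighted sum of the seven normalized signals (missing signals count as 0)."""
    return sum(w * node.signals.get(k, 0.0) for k, w in WEIGHTS.items())


def pick_node(nodes: list[NodeSignals]) -> NodeSignals | None:
    # Eliminate unhealthy nodes first, then take the highest scorer among survivors.
    survivors = [n for n in nodes if n.healthy]
    return max(survivors, key=score, default=None)
```

A weighted sum keeps each signal's influence explicit and easy to tune, and filtering out unhealthy nodes before scoring means a down node can never win on its other strengths.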

Transparent retry on node failure before the first chunk. Client-specified fallback models. Holding queue when all nodes are busy.
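The retry rule hinges on one fact: once a chunk has reached the client, resending the request would duplicate output. A minimal sketch of that rule, with client-supplied fallback models tried in order, is below. `nodes_for` and `open_stream` are hypothetical callbacks standing in for the scoring engine and the proxy; they are not Herd's API.

```python
from __future__ import annotations

from typing import Callable, Iterator


class NodeError(Exception):
    """Raised when a node fails while serving a request."""


def stream_with_retry(
    models: list[str],
    nodes_for: Callable[[str], list[str]],
    open_stream: Callable[[str, str], Iterator[str]],
) -> Iterator[str]:
    """Yield chunks from the first (model, node) pair that starts streaming."""
    for model in models:  # primary model first, then client-specified fallbacks
        for node in nodes_for(model):  # candidate nodes, best-scoring first
            started = False
            try:
                for chunk in open_stream(node, model):
                    started = True
                    yield chunk
                return  # stream finished cleanly
            except NodeError:
                if started:
                    # The client already has partial output; retrying would duplicate it.
                    raise
                # Failed before the first chunk: fall through to the next node.
    raise NodeError("no node could serve any of the requested models")
```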

mDNS auto-discovery. Nodes find the router on the LAN automatically. No config files, no service registries, no manual IP addresses.

8-tab live dashboard streamed over Server-Sent Events (SSE): fleet overview, trends, model insights, per-tag analytics, benchmarks, health, recommendations, and settings. Multimodal type badges and a per-node capability matrix. All backed by SQLite.

A 168-slot behavioral model (one slot per hour of the week) learns each device's weekly usage patterns. Meeting detection pauses inference while you're on a call.
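168 is 7 days × 24 hours, so each slot covers one hour of the week. One plausible way to learn such a pattern is an exponential moving average of idle observations per slot; the sketch below assumes that approach, and `WeeklyAvailability`, `alpha`, and `prior` are hypothetical names rather than Herd's implementation.

```python
from datetime import datetime

SLOTS = 7 * 24  # one slot per hour of the week = 168


def slot_index(ts: datetime) -> int:
    # Monday 00:00 -> 0, Sunday 23:00 -> 167
    return ts.weekday() * 24 + ts.hour


class WeeklyAvailability:
    """Hypothetical sketch: per-slot moving average of 'was this node idle?'."""

    def __init__(self, alpha: float = 0.2, prior: float = 0.5):
        self.alpha = alpha  # how quickly new observations override history
        self.p_idle = [prior] * SLOTS

    def observe(self, ts: datetime, idle: bool) -> None:
        i = slot_index(ts)
        target = 1.0 if idle else 0.0
        self.p_idle[i] += self.alpha * (target - self.p_idle[i])

    def expected_idle(self, ts: datetime) -> float:
        return self.p_idle[slot_index(ts)]
```

A router could then prefer nodes whose `expected_idle` is high for the current hour, which is one way an "availability trend" signal might be fed.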

OpenAI-compatible endpoints for chat, images, and transcription. Plus native Ollama format. Drop-in replacement for any existing client, framework, or agent pipeline.

Route LLMs, embeddings, image generation, speech-to-text, and vision across the fleet. Capability-aware — image requests only go to nodes with mflux, transcription only to nodes with Qwen3-ASR.
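Capability-aware routing amounts to a filter applied before scoring. The sketch assumes each node advertises a set of capability labels; `mflux` and `qwen3-asr` follow the text above, while the other labels and the `capable_nodes` helper are illustrative.

```python
from __future__ import annotations

# Hypothetical capability labels per request type.
REQUIRED_CAPABILITY = {
    "image": "mflux",
    "transcription": "qwen3-asr",
    "embedding": "embeddings",
    "vision": "vision",
    "chat": "llm",
}


def capable_nodes(request_type: str, fleet: dict[str, set[str]]) -> list[str]:
    """Return the nodes whose advertised capabilities cover this request type."""
    need = REQUIRED_CAPABILITY[request_type]
    return sorted(node for node, caps in fleet.items() if need in caps)
```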

Auto-detects chain-of-thought models like DeepSeek-R1 and inflates token budgets by 4×. Diagnostic headers show exactly how thinking tokens were spent.
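A sketch of the budget adjustment, assuming thinking models are recognized by name. The regex, the function name, and the `X-...` header names are hypothetical; only the 4× multiplier comes from the description above.

```python
import re

# Hypothetical detection pattern; DeepSeek-R1 is the example named on this page.
THINKING_MODEL = re.compile(r"deepseek-r1|qwq|thinking", re.IGNORECASE)
THINKING_MULTIPLIER = 4


def effective_max_tokens(model: str, requested: int) -> tuple[int, dict[str, str]]:
    """Return the token budget to send upstream plus illustrative diagnostic headers."""
    if THINKING_MODEL.search(model):
        budget = requested * THINKING_MULTIPLIER
        # Header names are placeholders, not Herd's actual diagnostic headers.
        return budget, {"X-Thinking-Model": "true", "X-Token-Budget": str(budget)}
    return requested, {}
```

Inflating the budget matters because a reasoning model can spend most of a small `max_tokens` allowance on hidden thinking and return an empty or truncated answer.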

Auto-discovers fleet capabilities, selects an optimal model mix to fill available memory, and benchmarks LLMs, embeddings, image gen, STT, and vision together. Per-model and per-node charts.
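Filling available memory with a model mix is a packing problem. The sketch below uses a simple greedy heuristic (take the largest model that still fits, after reserving headroom); it is a swapped-in illustration, not necessarily how Herd selects its mix, and the model sizes and `headroom_gb` default are assumptions.

```python
from __future__ import annotations


def select_model_mix(
    free_gb: float,
    candidates: list[tuple[str, float]],  # (model name, approximate resident size in GB)
    headroom_gb: float = 4.0,             # hypothetical margin for the OS and KV cache
) -> list[str]:
    """Greedy first-fit-decreasing: load the largest models that still fit."""
    budget = free_gb - headroom_gb
    chosen: list[str] = []
    for name, size in sorted(candidates, key=lambda c: c[1], reverse=True):
        if size <= budget:
            chosen.append(name)
            budget -= size
    return chosen
```

Greedy packing is fast and predictable but can leave gaps that an exhaustive search would fill; with only a handful of candidate models, either approach is cheap.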

Measures actual token usage per model, recommends optimal context sizes, and auto-adjusts to reclaim wasted VRAM. Most models use under 5% of allocated context — Herd fixes that.
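One way to turn measured usage into a recommendation: take a high percentile of observed prompt-plus-completion tokens, add headroom, and round up to a power of two. The p99 target, 1.5× headroom, 2048-token floor, and `recommend_num_ctx` name are all assumptions for illustration; `num_ctx` is Ollama's context-length option.

```python
import math


def recommend_num_ctx(observed_tokens: list[int], allocated: int,
                      headroom: float = 1.5, floor: int = 2048) -> int:
    """Size the context to p99 observed usage plus headroom, rounded up to a
    power of two, and never above the current allocation."""
    if not observed_tokens:
        return allocated  # no data yet: keep the current setting
    ordered = sorted(observed_tokens)
    # Nearest-rank p99
    p99 = ordered[min(len(ordered) - 1, math.ceil(0.99 * len(ordered)) - 1)]
    target = max(floor, int(p99 * headroom))
    rounded = 1 << (target - 1).bit_length()  # next power of two >= target
    return min(allocated, rounded)
```

For example, if a model allocated 32,768 tokens of context never sees more than 3,000 tokens, this sketch recommends 8,192, a quarter of the original allocation.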

One fleet, five model types. Every request routes to a node with the right capabilities.

Chat, completion, reasoning. Smart routing by memory fit and model size.

Vector search and RAG pipelines. Route to nodes with embedding models loaded.

Text-to-image via FLUX. OpenAI-compatible endpoint. Routes to nodes with mflux installed and GPU capacity.

Audio transcription routed to capable nodes. OpenAI Whisper-compatible endpoint.

Image understanding via multimodal models. Send images with prompts, get text descriptions and analysis.

The scoring engine evaluates every available node on 7 dimensions before routing. The system learns from every request and improves over time.

From client request to streamed response in milliseconds. Every step is traced, logged, and queryable.

Client hits any endpoint — chat completion, image generation, transcription, or embeddings. The request is normalized and routed by type.
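Normalization starts by mapping the endpoint path to a request type. The paths below are the standard OpenAI, Anthropic, and Ollama routes; that Herd exposes exactly this set, and the rule that a chat request carrying images becomes vision work, are assumptions of this sketch.

```python
# Hypothetical route table built from the public OpenAI, Anthropic, and Ollama paths.
ROUTE_TYPES = {
    "/v1/chat/completions": "chat",
    "/v1/completions": "chat",
    "/v1/messages": "chat",  # Anthropic Messages API
    "/v1/embeddings": "embedding",
    "/v1/images/generations": "image",
    "/v1/audio/transcriptions": "transcription",
    "/api/chat": "chat",  # native Ollama
    "/api/generate": "chat",
    "/api/embed": "embedding",
}


def _has_image(body: dict) -> bool:
    for msg in body.get("messages", []):
        if msg.get("images"):  # Ollama native: base64 images attached to the message
            return True
        content = msg.get("content")
        # OpenAI uses "image_url" content parts; Anthropic uses "image".
        if isinstance(content, list) and any(
            part.get("type") in ("image_url", "image") for part in content
        ):
            return True
    return False


def request_type(path: str, body: dict) -> str:
    kind = ROUTE_TYPES.get(path.rstrip("/"))
    if kind is None:
        raise ValueError(f"unsupported endpoint: {path}")
    # Chat requests that carry images are routed as vision work.
    if kind == "chat" and _has_image(body):
        return "vision"
    return kind
```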

The scoring engine eliminates unhealthy nodes, scores survivors on 7 signals, and selects the best. Fallback models are tried if the primary isn't available.

The request enters a per-node:model queue with dynamic concurrency. The queue manager balances load and auto-rebalances if conditions change.

The streaming proxy forwards to Ollama. If the node fails before the first chunk, auto-retry kicks in with a different node. Format conversion (SSE / NDJSON) is transparent.
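Ollama's native `/api/chat` streams newline-delimited JSON, while OpenAI clients expect Server-Sent Events that end with `data: [DONE]`. A simplified converter is sketched below; it omits the `id`, `created`, and usage fields a full OpenAI chunk carries, and `ndjson_to_sse` is a hypothetical name.

```python
import json
from typing import Iterable, Iterator


def ndjson_to_sse(lines: Iterable[str], model: str) -> Iterator[str]:
    """Convert Ollama /api/chat NDJSON lines into OpenAI-style SSE events."""
    for line in lines:
        if not line.strip():
            continue  # skip blank keep-alive lines
        msg = json.loads(line)
        done = msg.get("done", False)
        delta = {} if done else {"content": msg.get("message", {}).get("content", "")}
        chunk = {
            "object": "chat.completion.chunk",
            "model": model,
            "choices": [{
                "index": 0,
                "delta": delta,
                # Ollama reports "stop" or "length" in done_reason on the final line.
                "finish_reason": (msg.get("done_reason") or "stop") if done else None,
            }],
        }
        yield f"data: {json.dumps(chunk)}\n\n"
        if done:
            break
    yield "data: [DONE]\n\n"  # OpenAI stream terminator
```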

Every request is traced to SQLite. Latency data feeds back into the scoring engine. The fleet gets smarter with every request it serves.
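A sketch of the trace-and-feedback loop using Python's built-in `sqlite3`. The `traces` schema and the helper names are hypothetical; the point is that the same table that records each request can answer the scoring engine's latency-history question with a single `GROUP BY`.

```python
from __future__ import annotations

import sqlite3


def open_trace_db(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    # Hypothetical schema: one row per routed request.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS traces ("
        "ts REAL, node TEXT, model TEXT, request_type TEXT, latency_ms REAL, ok INTEGER)"
    )
    return conn


def record(conn: sqlite3.Connection, ts: float, node: str, model: str,
           request_type: str, latency_ms: float, ok: bool = True) -> None:
    with conn:  # commits the insert on success
        conn.execute(
            "INSERT INTO traces VALUES (?, ?, ?, ?, ?, ?)",
            (ts, node, model, request_type, latency_ms, int(ok)),
        )


def latency_history(conn: sqlite3.Connection, model: str, since_ts: float) -> dict[str, float]:
    """Mean latency of successful requests per node for one model."""
    rows = conn.execute(
        "SELECT node, AVG(latency_ms) FROM traces "
        "WHERE model = ? AND ok = 1 AND ts >= ? GROUP BY node",
        (model, since_ts),
    )
    return {node: avg for node, avg in rows}
```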

Ollama Herd speaks the Anthropic Messages API. One env var redirects Claude Code CLI to your local fleet — agentic coding with your models, your hardware, your data. No rate limits, no per-token bills, no prompts leaving your network.

Includes three-layer context management that fixes the "Claude Code breaks at 30K tokens" failure mode on local Qwen3-Coder models. Per-tier model routing maps claude-haiku-* to a fast Ollama model and claude-sonnet-* / claude-opus-* to an 80B MoE via MLX — configurable via FLEET_ANTHROPIC_MODEL_MAP.
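Per-tier mapping can be expressed as glob patterns over Anthropic model names. The sketch assumes `FLEET_ANTHROPIC_MODEL_MAP` holds a JSON object of pattern-to-model pairs; its real format is not specified above, and the target names are placeholders rather than real models. (Claude Code reads its API endpoint from `ANTHROPIC_BASE_URL`, which is presumably the one env var mentioned above.)

```python
from __future__ import annotations

import fnmatch
import json
import os

# Placeholder targets: the page says haiku maps to a fast Ollama model and
# sonnet/opus to an 80B MoE via MLX, but does not name the models.
DEFAULT_MAP = {
    "claude-haiku-*": "FAST_OLLAMA_MODEL",
    "claude-sonnet-*": "MOE_80B_MLX_MODEL",
    "claude-opus-*": "MOE_80B_MLX_MODEL",
}


def load_model_map() -> dict[str, str]:
    # Assumption: the variable holds a JSON object; the real format may differ.
    raw = os.environ.get("FLEET_ANTHROPIC_MODEL_MAP")
    return json.loads(raw) if raw else dict(DEFAULT_MAP)


def resolve_model(requested: str, model_map: dict[str, str]) -> str:
    for pattern, target in model_map.items():
        if fnmatch.fnmatchcase(requested, pattern):
            return target
    return requested  # unmapped names pass through unchanged
```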

One base_url change connects any framework. Ollama Herd is the orchestration layer, not a replacement.
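For OpenAI-style clients the change really is just the base URL (for example, `base_url=` when constructing the `OpenAI` client in the official Python SDK). The stdlib-only sketch below builds the same request by hand; the router host and port in `HERD_BASE_URL` are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical router address: substitute your own host and port.
HERD_BASE_URL = "http://herd-router.local:11435/v1"


def chat_request(model: str, prompt: str, base_url: str = HERD_BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the fleet router."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}], "stream": False}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

# Sending it is one call once the router is reachable:
# with urllib.request.urlopen(chat_request("llama3.2:3b", "Hello")) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```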

Any client that supports a custom OpenAI, Ollama, or Anthropic Messages base URL works out of the box.

Beyond LLMs — also routes image generation (FLUX via mflux) and speech-to-text (Qwen3-ASR) to capable nodes.

Wondering about LM Studio's LM Link? LM Link connects your Macs to each other. Ollama Herd routes across your whole team's mixed fleet (Mac, Linux, Windows, any Ollama or MLX node) with scoring, context management, and team admin.

Multimodal routing, smart benchmarking, and dynamic context optimization are shipped. Now we're building an agentic router — a fleet that doesn't just wait for requests, but generates its own work, learns your patterns, and uses idle compute proactively.

500 MacBooks with Apple Silicon. Tens of terabytes of unified memory. Sitting idle during meetings, after hours, and weekends. Ollama Herd turns your existing hardware into a private AI compute platform — LLM inference, image generation, transcription, and embeddings — at zero additional cost.

SSO, RBAC, audit logging, compliance dashboards, fleet management, and SLA support. Everything enterprises need to run a full AI stack on the hardware they already own.

Stop leaving compute on the table. Start herding.